Infusing LLMs and Chatbots with Up to Date Information

What got us here can’t take us where we want to go.

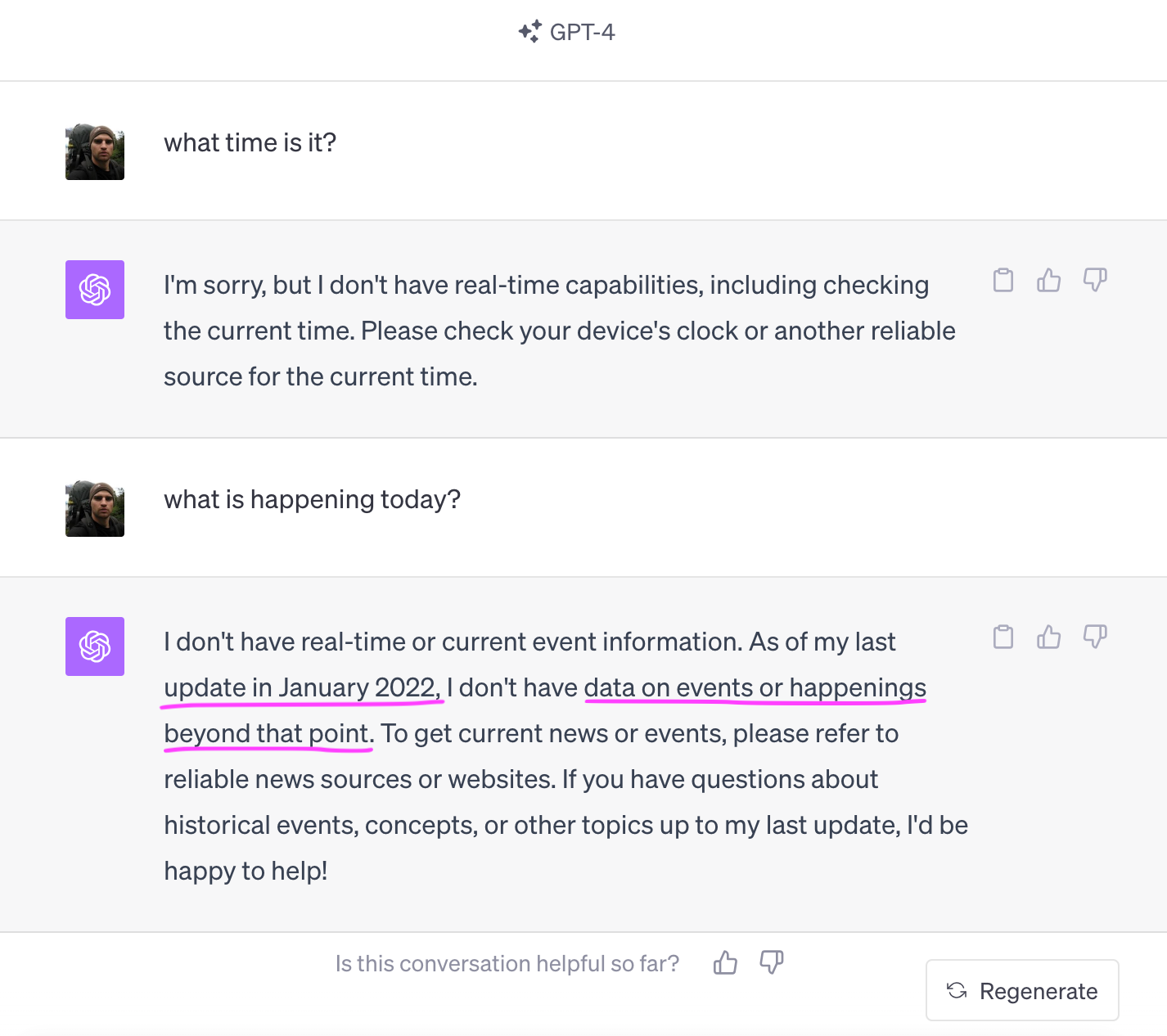

GPT4 and related models are great conversationalists, dropping facts, reasoning, summarizing essays, and even helping touch up our resumes…

A major use for LLMs like GPT, however, has been the “chatbot” interface. Rather than Googling for things, which is essentially an information retrieval or database query, you can ask questions. Ask for information, or ask GPT to write you a song or design you a website. Now it can also draw you a picture or interpret a PDF document, such as a long scientific paper that you don’t want to read.

Other platforms are focused on fun conversations with chatbots, such as Character.AI or some of Facebook’s chatbots recently integrated into WhatsApp, the largest messaging platform in the world.

And yet these chatbots don’t know what happened over the weekend, much less this afternoon.

That’s the thing about news and recent information: the time decay on most information is quite short. A lot of what we say or look up is relevant right now, and may be of little value in twenty minutes, much less hours or days from now. The obvious example is sports scores and top plays, but this applies to many other things besides.

I’m sure you can think of countless examples in your life where you’d want an intelligent agent to be more aware of what’s going on right now than you are.

And yet why are these powerful LLMs months, if not years, behind the times?

Random access memories

LLMs like GPT are trained on “snapshots” of the internet. Their training data is millions of documents and billions of tokens, and the training task is basically filling in the blanks for “good” docs on the internet.

That is how GPT learns everything it knows — in one continuous process — from facts, to song lyrics, to logic and grammar.

Chatbots like OpenAI’s GPT4, Facebook’s LLaMA and HuggingFace’s open source Zephyr 7B are further “finetuned” on human conversations, to operate better than “raw LLMs” as the context-preserving Q&A agents that we love so much.

Why can’t these models be constantly retrained on new information?

Models like GPT take months to train from scratch. Smaller models train in days or maybe even hours, especially if they are already off to a good start.

I don’t doubt that people are fine-tuning some of these “foundational models” on recent information to fix recent facts and inject new ones. But the models were… not designed for this — they expect to get a huge dataset of randomly sorted, pretty accurate information. You can certainly favor recency… and design a learning curriculum to emphasize that information in the model.

But we’re still talking about modifying a large model, with every little fact. And good luck getting that update in real time — or even say 20-60 minutes behind the times. And if you did… you would be spending a lot of effort modifying the core model with rumors and noise, only to undo much of it an hour later.

I do think that big, foundational models will get somewhat more frequent updates than the “as of 18-24 months ago” cutoffs that GPT releases have mostly had. But that is not a solution for the model giving you answers and reasoning about Halloween 2023.

There are two ways for your LLM / chatbot to know what’s happening recently:

1. update / fine-tune its parameters
2. offer the AI extra information

This is also how we answer questions. Either from our knowledge / memory [2+2=?], or we look it up… and then still use our brains to process that additional context.

Not only do we know how to blend our internalized priors with “book knowledge,” we also know when and how to look things up. But it does take a bit of effort…
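The second option, offering the AI extra information, usually comes down to putting recent text in front of the question before the model sees it. Here is a minimal sketch of that idea. The function name `build_prompt` and the prompt wording are our own illustrative assumptions, not any particular vendor's API.

```python
from datetime import datetime, timezone


def build_prompt(question: str, snippets: list[str], now: datetime) -> str:
    """Prepend recent snippets to the question so the model can use them."""
    # Hypothetical prompt layout; real systems tune this wording heavily.
    header = f"Current time (UTC): {now:%Y-%m-%d %H:%M}"
    if snippets:
        context = "\n".join(f"- {s}" for s in snippets)
    else:
        context = "(no recent information found)"
    return (
        f"{header}\n"
        "Recent information:\n"
        f"{context}\n\n"
        f"Question: {question}\n"
        "Answer using the recent information where it is relevant."
    )


if __name__ == "__main__":
    now = datetime(2023, 10, 31, 12, 0, tzinfo=timezone.utc)
    print(build_prompt("Who won last night?", ["Team A beat Team B 3-1"], now))
```

The model's parameters stay untouched. Everything it learns about "now" arrives through the prompt, which is why the quality of the snippets matters so much.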

Of course this sounds a lot like a Google query. Actually, there are two kinds of query here, which a pre-GPT chatbot like Amazon’s Alexa or Apple’s Siri might consider:

- direct API, like for weather, sports scores, stock market prices
  - very accurate, but super specific
  - Wolfram Alpha is perhaps an extended version of that
- text-based Google query: get the Google results, and try to summarize those somehow
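To make the two kinds of query concrete, here is a rough sketch of how an assistant might choose between them. The keyword table and the API names are hypothetical, invented for illustration. We are not describing how Alexa or Siri actually route requests.

```python
# Hypothetical keyword table: which structured API handles which kind of question.
DIRECT_APIS = {
    "weather": ("weather", "forecast", "temperature"),
    "sports_scores": ("score", "scores"),
    "stock_prices": ("stock", "share price", "ticker"),
}


def route(question: str) -> str:
    """Return the name of a direct API, or 'text_search' as the fallback."""
    text = question.lower()
    for api_name, keywords in DIRECT_APIS.items():
        if any(k in text for k in keywords):
            return api_name
    return "text_search"


if __name__ == "__main__":
    print(route("What's the weather in Boston?"))
    print(route("what happened in sports over the weekend"))
```

Specific questions land on a precise API, while anything vague falls through to text search and has to be summarized from whatever comes back.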

For Robots, by Robots

The problem with turning every live question into a Google search is that we like to ask super vague questions like “what happened in sports over the weekend” or “is there anything happening in my town?”

Actually we want to know these things without even having to ask. That’s what TV is for, to some extent. But let’s put the implied questions aside for a future blog post.

Often we want specific, short, timely and importance-weighted answers to pretty general questions. Then we see something that interests us and ask “tell me more.”

Rather than counting on Google to answer such questions, we take a different approach.

DeepNews: AI-Driven Journalism and Realtime News Archive

Large language models (LLMs) like ChatGPT do amazing things. But they don’t know what happened over the weekend. There have been attempts to “fix” this by injecting recent data into the LLMs’ context, usually via search results. But this is not a smooth or native experience.

As described in detail earlier, with DeepNews we do something like this:

1. collect all the information fragments in realtime from 20,000+ sources
2. organize them by pockets of information, stories if you will
3. determine what’s novel, compelling and fits into a high level topic
4. write a summary, tag named entities, sources, etc.

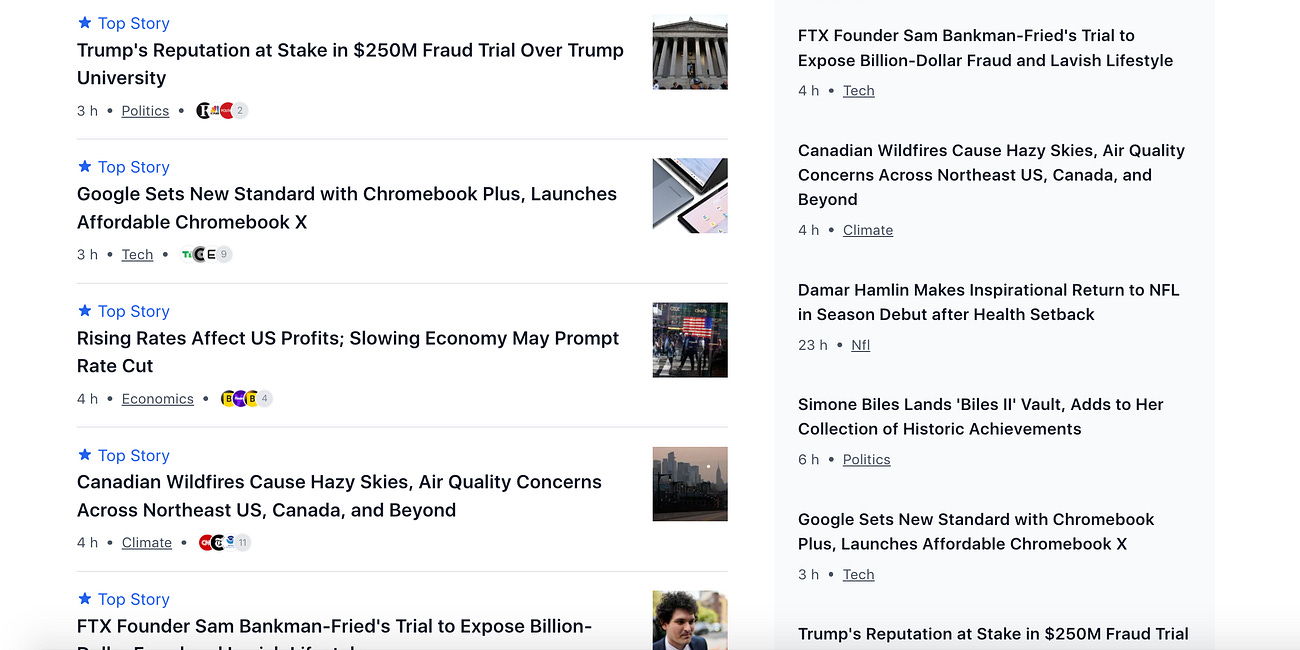
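As a toy illustration of the "organize fragments into stories" step, the sketch below groups short texts by word overlap and ranks the groups by how many distinct sources mention them. This is a deliberately simple stand-in, not the DeepNews pipeline. The Jaccard threshold, the `Story` class and the source-count ranking are all assumptions made for the example.

```python
import re
from dataclasses import dataclass, field


def tokens(text: str) -> set[str]:
    """Lowercased words longer than 3 characters, as a crude content signature."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 3}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words two token sets have in common (0.0 when both are empty)."""
    return len(a & b) / len(a | b) if a | b else 0.0


@dataclass
class Story:
    fragments: list[str] = field(default_factory=list)
    sources: set[str] = field(default_factory=set)
    vocab: set[str] = field(default_factory=set)


def group_into_stories(fragments, threshold=0.3):
    """Greedily attach each (source, text) fragment to the first similar story."""
    stories: list[Story] = []
    for source, text in fragments:
        toks = tokens(text)
        match = next((s for s in stories if jaccard(toks, s.vocab) >= threshold), None)
        if match is None:
            match = Story()
            stories.append(match)
        match.fragments.append(text)
        match.sources.add(source)
        match.vocab |= toks
    # The number of independent sources is used here as a stand-in for importance.
    return sorted(stories, key=lambda s: len(s.sources), reverse=True)


if __name__ == "__main__":
    feed = [
        ("a", "Earthquake strikes northern Chile coast"),
        ("b", "Strong earthquake strikes Chile coast today"),
        ("c", "Central bank raises interest rates again"),
    ]
    for story in group_into_stories(feed):
        print(len(story.sources), story.fragments)
```

A real system would use embeddings or a trained model rather than word overlap, and would write a summary for each story, but the shape is the same: many fragments in, a few ranked stories out.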

Instead of your vague and fuzzy recent-information query generating a cascade of Google queries, it can now be applied on a compact, timely, pre-ranked “live database” of recent information.
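One way to picture a query against such a pre-ranked “live database” is to score each story by importance multiplied by a freshness factor that decays over time. The sketch below assumes a six-hour half-life and a dictionary layout with `title`, `summary`, `importance` and `updated` fields. Both are made up for illustration, and the real ranking may look quite different.

```python
from datetime import datetime, timedelta, timezone


def freshness(age_hours: float, half_life_hours: float = 6.0) -> float:
    """Exponential decay: 1.0 for brand-new stories, 0.5 after one half-life."""
    return 0.5 ** (age_hours / half_life_hours)


def top_stories(stories, query, now, k=3):
    """Rank stories matching any query word by importance times freshness."""
    words = set(query.lower().split())
    scored = []
    for story in stories:
        # Skip stories whose summary shares no word with the query.
        if not words & set(story["summary"].lower().split()):
            continue
        age_hours = (now - story["updated"]).total_seconds() / 3600
        scored.append((story["importance"] * freshness(age_hours), story["title"]))
    return [title for _, title in sorted(scored, reverse=True)[:k]]


if __name__ == "__main__":
    now = datetime(2023, 10, 31, 12, 0, tzinfo=timezone.utc)
    db = [
        {"title": "Old final", "summary": "sports final result",
         "importance": 0.9, "updated": now - timedelta(hours=24)},
        {"title": "Live game", "summary": "sports game underway",
         "importance": 0.6, "updated": now - timedelta(hours=1)},
        {"title": "Election", "summary": "politics vote count",
         "importance": 1.0, "updated": now},
    ]
    print(top_stories(db, "sports", now))
```

With these numbers the less important but much fresher story comes first, which is the behavior you want for a question like “what’s happening in sports?”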

In the parlance of LLMs, we compress all of the chatter on Twitter, Telegram, etc — into something like 2,000 self-updating stories per day. We are expanding to 10,000 stories with more local and non-English language support soon.

Stories are ranked by importance, and as they are linked to entities, sources and related stories, it is also not hard to ask “tell me more,” which expands your query to something like a breadth-first search (BFS) from a story, entity, or high level topic. That larger body of information can also be summarized by the LLM. You can get as much information as you want, without having to manually click on a dozen links and copy-paste those results into ChatGPT. Computers are good at following links, and LLMs are good at summarizing large amounts of repetitive information.
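A “tell me more” expansion of this kind can be sketched as a depth-limited breadth-first search over the links between stories and entities. The graph layout and the node names below are hypothetical. Only the traversal itself is standard.

```python
from collections import deque


def tell_me_more(graph: dict[str, list[str]], start: str, max_depth: int = 2) -> list[str]:
    """Breadth-first walk from `start`, returning nodes in the order visited."""
    seen = {start}
    order = []
    queue = deque([(start, 0)])
    while queue:
        node, depth = queue.popleft()
        order.append(node)
        if depth == max_depth:
            continue  # do not expand beyond the requested depth
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, depth + 1))
    return order


if __name__ == "__main__":
    links = {
        "story:quake": ["entity:Chile", "story:aftershock"],
        "entity:Chile": ["story:quake", "story:election"],
        "story:aftershock": ["story:quake"],
        "story:election": ["entity:Chile", "story:runoff"],
    }
    print(tell_me_more(links, "story:quake", max_depth=2))
```

Raising `max_depth` pulls in more loosely related material, and the collected nodes can then be handed to the LLM for summarizing.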

For Humans too

You can see DeepNews live, right now, on our website and also on our Telegram channels. We try to keep the Telegram channels not too noisy, so we only post the bigger stories, and we don’t re-post when a story updates, unless it’s a major change.

Check us out.

Responding to what early users have been telling us:

- we are adding more niche topics like sub-niches in tech and crypto, and local news (besides San Francisco, which we already have)
- different levels of personalization are coming soon. Many of you don’t want to see sports or politics, but do want to see minor stories in the niches you like. We get that.

Overall, we think that this kind of live news curation and “compression” in realtime will have a few useful form factors: for humans, on the website, our (future) app, WhatsApp and Telegram, and as a live information index that is well tailored for chatbot LLMs.

We will have a chatbot interface on our site soon. And we’d like to offer our stories as an API to other chatbot makers.

On top of that, we will of course also be improving our stories. Recently we’ve focused on speed, accuracy and efficiency of writing, as well as training models to detect what is a good, timely or novel story. As we get more user feedback, we’ll focus a bit more on our generative models and topic assignment. We will also add an order of magnitude more sources and stories, going into more niche spaces.

Stay tuned, we’ll be updating more soon.