DeepNews: AI-Driven Journalism and Realtime News Archive

Curating live news with large language models (LLMs), for journalists, traders, sports fans, and everyone in between.

Large language models (LLMs) like ChatGPT do amazing things. But they don’t know what happened over the weekend.

There have been attempts to “fix” this by injecting recent data into the LLMs’ context, usually via search results. But this is not a smooth or native experience.

Beyond LLMs, recency has long been a problem in information retrieval. I remember being on the Google Search team circa 2006 and many of the projects involved news injection to Search results, measuring what documents contain novel information, ways to fix Spelling Correction to faster adjust to changing language and new “named entities,” and other ways to preference fresh content. Because that’s the thing — older content has more links and social proof. It’s “better content” according to most algorithms, but people want the fresh new thing.

Their inability to integrate fresh information is one of LLMs’ biggest weaknesses. If you ask an LLM for the score in the NBA game, it could run a query and tell you. But if you ask “what’s up in tech news today?” it simply cannot tell you, even with billions of parameters at its disposal.

Rather than solving this problem with one-offs and search results (the twenty-year-old tech that LLMs are supposed to be replacing), we went all the way and built LLMs to gather, rank and ultimately write the News. We call it DeepNews.

DeepNews is the first fully automated LLM-based newspaper, covering all the news you want to know, in realtime*.

DeepNews has been live for several weeks now and we have a group of loyal users. I personally find it much better than the alternatives for keeping up with the news, in a few specific ways.

DeepNews writes all its own stories. We do not summarize articles — paywalled or otherwise. Therefore we can cover stories not yet in major publications, or even on prominent blogs. We can be fast, with headlines that are optimized for the user, not clickbait encouraging you to read an article. Our product may compete with article summarizers and news link aggregation, but that is not what we are doing.

Some simple things are hard for LLMs, like writing a coherent sports game recap. But some hard things are easy. Deciding which stories to write, and writing them, can be difficult. Having the LLM generate the ideal headline, the perfect text for SMS notifications, or the best text prompt for a text-to-voice reading of the headlines is something the LLMs can do well, not least because our model wrote the story.

Lastly, curating the news in realtime (all the news that matters, for a couple dozen major topics) gives you an interesting dataset. What happened in Tech or Sports a week ago? We also have the archive by named entities and sub-topics. Oddly, neither Google nor Twitter has good data for this question. Yes, they have more data, in fact too much data. If you want to know about the world in a comprehensive but fairly data-efficient way, we reduce it down to a couple thousand “news stories” per day: comprehensive, indexed, and organized by topic, entities, and importance.

Speaking of datasets, we can also track which “small stories” became bigger stories over the following days. We look forward to training models on this dataset to predict, from the first pass, which small stories are likely to develop into bigger stories, well before CNN or the NYTimes picks up a story like “SBF arrested” or “Mud at Burning Man.”
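To make the idea of such a dataset concrete, here is a minimal sketch of how training labels might be derived from per-day story sizes. Everything in it is an assumption for illustration: the function name `label_growth`, the use of tweet counts as a proxy for story size, and the `small_max` / `big_min` thresholds are hypothetical and not taken from the DeepNews pipeline.

```python
def label_growth(daily_sizes, small_max=10, big_min=100):
    """Label stories that started small as grew (True) or did not grow (False).

    daily_sizes maps a story id to its tweet counts per day, first day first.
    Stories already above small_max on day one are skipped, since they were
    never "small stories" to begin with. Thresholds are illustrative only.
    """
    labels = {}
    for story_id, sizes in daily_sizes.items():
        if not sizes or sizes[0] > small_max:
            continue
        labels[story_id] = any(n >= big_min for n in sizes[1:])
    return labels


# Hypothetical history: tweet counts attached to each story on successive days.
history = {
    "story-a": [4, 60, 350],  # small on day one, big by day three
    "story-b": [6, 5, 2],     # stayed small
    "story-c": [500, 200],    # big from the start, so not a training example
}
labels = label_growth(history)
```

Under these assumptions, `labels` pairs each initially small story with whether it later grew, which is the kind of target a first-pass prediction model could be trained against.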

How it works

In simple terms, we use AI to

figure out what people are talking about

write the best stories we can about these topics and entities

determine if each story is newsworthy, and how important it is

More specifically, we ingest tweets from ~20,000 top accounts across dozens of topics, run clustering algorithms on the tweet embeddings to get story candidates, then use LLMs to write original stories as well as rank how newsworthy a story might be.

Clustering the tweets by their embeddings turns out to be a pretty good, compute-efficient way to discover “there is a possible story about X.” For those not familiar with sentence-level embeddings, it might be surprising how effective these are for grouping text together along named entities, events and common [but not identical] phrases, including names and phrases that the models could not possibly have seen before.
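As a rough illustration of clustering by embedding similarity (not the actual DeepNews algorithm, whose details are not given here), the sketch below groups toy vectors with a greedy single pass over cosine similarity. The 3-dimensional vectors, the `threshold` of 0.8, the minimum cluster size of 2, and the function names are all assumptions made for the example; a real system would use sentence embeddings from a model and probably a more robust clustering method.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def greedy_cluster(embeddings, threshold=0.8):
    """Put each vector in the first cluster whose centroid is similar enough.

    Returns a list of clusters, each a list of indices into `embeddings`.
    """
    clusters = []   # index lists, one per cluster
    centroids = []  # running mean vector per cluster
    for i, vec in enumerate(embeddings):
        for c, centroid in enumerate(centroids):
            if cosine(vec, centroid) >= threshold:
                clusters[c].append(i)
                n = len(clusters[c])
                # Update the running mean with the new member.
                centroids[c] = [(m * (n - 1) + v) / n for m, v in zip(centroid, vec)]
                break
        else:
            # No existing cluster was close enough, so start a new one.
            clusters.append([i])
            centroids.append(list(vec))
    return clusters


# Toy "embeddings": two tweets about one event, two about another, one stray.
tweets = [
    [0.9, 0.1, 0.0],
    [0.8, 0.2, 0.0],
    [0.0, 0.1, 0.9],
    [0.1, 0.0, 0.95],
    [0.0, 1.0, 0.0],
]
clusters = greedy_cluster(tweets)
# Treat only clusters with at least two tweets as story candidates.
story_candidates = [c for c in clusters if len(c) >= 2]
```

Here the first two and the next two vectors form candidate clusters, while the stray vector is left on its own and dropped. The greedy pass is order-dependent, which is one reason a production system might prefer a different algorithm.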

The primary value of aggregated tweets is as a great proxy for “there is something new about X.” However, many “clusters” are not newsworthy: for example, Crypto users wishing each other “GM” or sharing birthday greetings, or fans cheering for a player or team with no context. We use LLMs to rank whether a story is “great,” “good” or “bad” / “not a story.”
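One way such a rating step could be wired up is sketched below. This is an assumption-laden illustration rather than the DeepNews implementation: the prompt wording, the `rate_cluster` and `parse_label` helpers, and the `fake_llm` stand-in (used here so the example runs without any model or API) are all hypothetical.

```python
from typing import Callable, List

LABELS = ("great", "good", "bad")


def build_prompt(tweets: List[str]) -> str:
    """Format a cluster of tweets into a rating prompt (wording is illustrative)."""
    joined = "\n".join(f"- {t}" for t in tweets)
    return (
        "Rate whether these tweets describe a news story.\n"
        "Answer with one word: great, good, or bad.\n\n"
        f"{joined}"
    )


def parse_label(reply: str) -> str:
    """Map a free-text model reply to one of LABELS, defaulting to 'bad'."""
    words = reply.lower().replace(".", " ").replace(",", " ").split()
    for word in words:
        if word in LABELS:
            return word
    return "bad"


def rate_cluster(tweets: List[str], llm: Callable[[str], str]) -> str:
    """Ask the supplied model callable to rate a cluster and normalize the reply."""
    return parse_label(llm(build_prompt(tweets)))


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call; treats greeting clusters as non-news."""
    return "Bad." if "GM" in prompt else "Good"


greeting_rating = rate_cluster(["GM everyone", "GM GM"], fake_llm)
news_rating = rate_cluster(["Exchange halts withdrawals"], fake_llm)
```

Defaulting unparseable replies to "bad" is a conservative design choice in this sketch: a cluster is only promoted to a story when the model clearly says so.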

Lastly we have LLMs that write the story body and headline. Unlike news aggregators, these are original stories, based on Twitter sources, but also prior information and other information given to the LLM as context.

I’ll skip the technical details, perhaps best for a follow-up article.

In practice we find that by following just 20,000 top “newsy” Twitter accounts, it is *impossible* for us to miss a major news story. It is possible of course to miss minor stories, to write some non-news, or to have a bit of duplication.

But if a meteor strikes Miami it would be impossible for us not to get the story quite quickly. If there is “Mud in Burning Man” we will get it, before CNN does. If there’s an arrest or a major hack in the Crypto world, we will have it. This speaks to the robustness of Twitter as a data source, as well as to the power and flexibility of LLMs.

Over time, LLMs give us the freedom to improve writing (and information gathering) in a flexible way. More on that later.

The hard parts here are

a minimum viable pipeline for information gathering

a high-recall process for story candidates

writing good stories and filtering out non-stories

doing this in realtime

We have been doing that live for a few weeks now, and have continuously been shipping improvements, focusing on speed, story quality, accuracy, and discoverability. For you as the reader, it should be as simple as visiting our site and seeing all the news that’s fit to read: browsing headlines, and clicking through for more details.

What it looks like

We ingest tweets from ~20,000 accounts. About 8% of these tweets make their way into story clusters. As you can see, the resulting volume ranges from 10,000 to 20,000 tweets per day. Content dips on the weekend, less so during the NFL season.

We have up to 400 distinct stories about the NFL alone, peaking as you’d expect on a Sunday. NCAA (mostly college football) peaked at 200 stories per day during the college football opening weekend.

Boxing / UFC stories peak when there’s an event or in the aftermath.

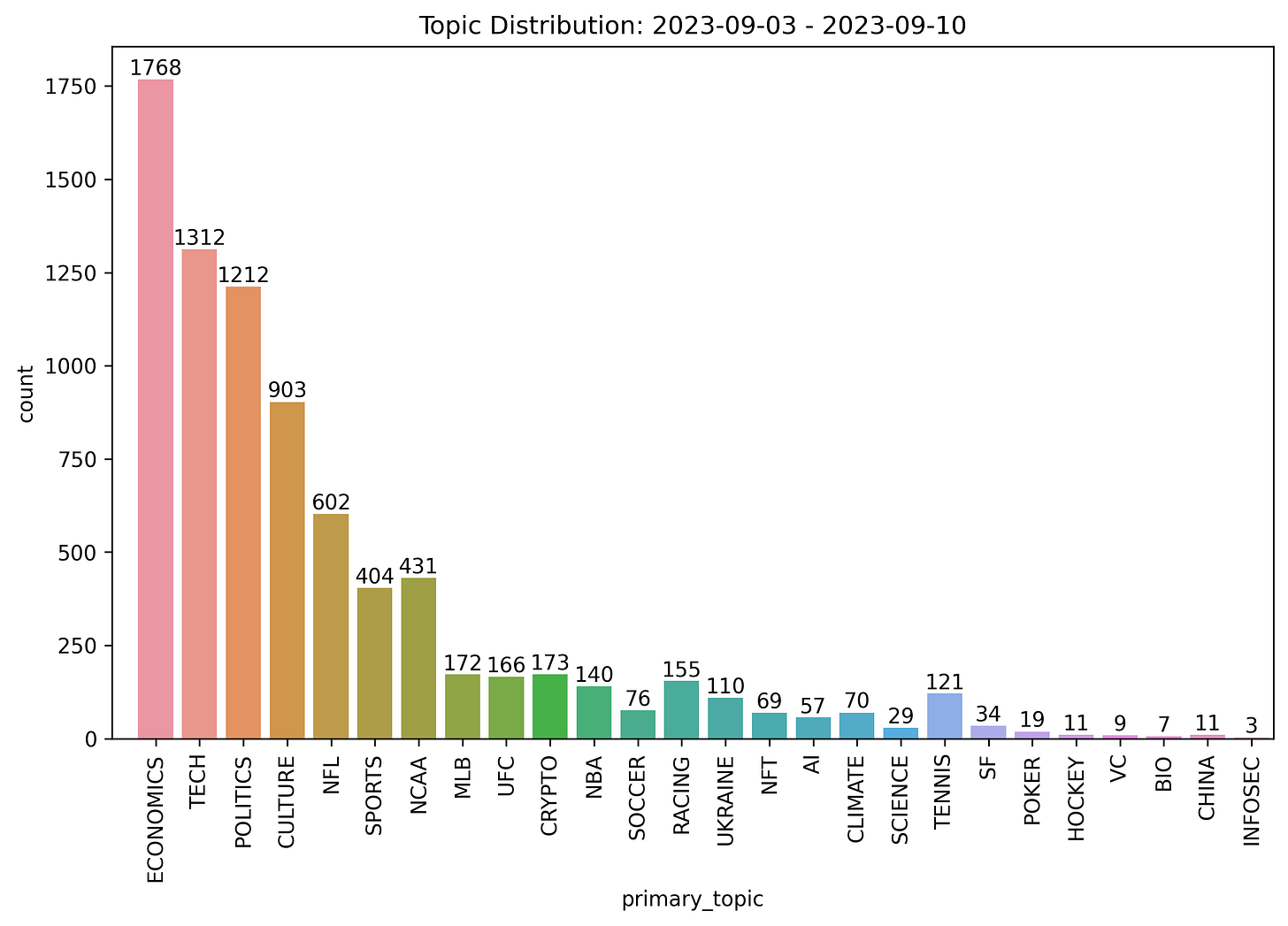

We cover two dozen “primary topics” from Tech and Economics to Sports and NFL to Tennis, Climate, Ukraine War and San Francisco / Bay Area.

Over the course of a week we cover about 10,000 unique stories, about 1,000 to 2,000 stories per day.

The distribution of topics is fairly consistent week to week but does include some variation. For example Tennis stories ticked up during the US Open.

So there you have it

We cover two dozen major topics

20,000 Twitter sources

8% of their tweets end up tied to news stories

10,000 to 20,000 tweets per day

10,000 stories per week

Biggest topics are Economics, Tech, Politics, Culture, Sports and NFL

We will add further sub-categories for some of these soon
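As a quick sanity check on how these figures fit together, the arithmetic below derives the implied ingestion volume. It assumes that the 10,000 to 20,000 tweets per day are the tweets that end up in story clusters (the 8%), so the total ingested volume is an inference from the numbers above rather than a reported figure.

```python
clustered_share = 0.08                # 8% of ingested tweets end up tied to stories
clustered_per_day = (10_000, 20_000)  # tweets per day tied to stories (low, high)
accounts = 20_000                     # Twitter sources followed
stories_per_week = 10_000

# Implied total tweets ingested per day (low, high), under the assumption above.
ingested_per_day = tuple(round(n / clustered_share) for n in clustered_per_day)

# Implied average tweets per followed account per day.
per_account = tuple(n / accounts for n in ingested_per_day)

# Average stories per day over a week.
stories_per_day = stories_per_week / 7
```

Under that reading, the pipeline ingests roughly 125,000 to 250,000 tweets per day, or about 6 to 13 per account, and 10,000 stories per week averages to about 1,400 per day, consistent with the 1,000 to 2,000 daily range.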

Who is this for?

This is not News Aggregation. Our stories are original stories, written by AI. We do not wait for NYTimes or WSJ to write an article. That said we understand that News is not a greenfield. People have their ways of getting the news. Including trawling Twitter and Telegram, setting notifications for select accounts, etc.

Everyone follows the news, including people who claim that they don’t care about it. We think that DeepNews will be most useful for a few kinds of news readers.

people who use Twitter — daily or sometimes — and are looking for a more efficient way to catch up on what’s going on

people interested in niches like Crypto, AI, and Science who find it hard to get that information without their feed being crowded by unrelated topics

people interested in trading markets, who want to follow Economic news, as well as news about specific companies or crypto, in close to realtime

As a former quant and current Twitter addict, I understand the pain of noisy news feeds and endless scrolling.

Suppose there is a major Crypto news story like an FTX executive arrested. We will have the story. We will have it at the top. But we won’t show you the story 200 times, which is what you’d see in a crypto-focused Twitter feed. In our feed you can read the headline, scroll past the story and find out everything else that’s going on as well.

The value of our product to the trader and news junkie is contingent on

being fast — as fast as Twitter and Bloomberg, faster than NYTimes, WSJ and CNN

being thorough (in terms of not missing important and semi-important stories)

writing good headlines — as the headline is all you want to know 90% of the time

minimizing noise and repetition

With GPT4 and our own trained relevance models, what we offer now is quite good, and will be getting a lot better. The tailwind of LLM improvement is at our back.

Realtime internet archive

Imagine asking your conversational agent “what’s happening right now in Sports?” — much less “what happened yesterday?”

Companies offering personalized chat bots, from Character.AI to Facebook to startups in the latest YC batch, are focused on the human-robot interaction, not on curating the news. When you ask Meta’s “chatbot Snoop Dogg” what’s happening in Crypto, this will trigger a Google Search. However, Google has never been particularly well suited for answering that question. It is even less suited to a user who is told about SBF and follows up with “I already know that, tell me about something else.”

Chatbots and LLMs like GPT4 are well-tuned to answer questions about information from five years ago. This can be scaled to a year ago, or maybe even a month ago. But assumptions start to break down once you want to know what is relevant *right now* — minutes or hours ago. Or even what happened last week, and is still relevant now.

Yet as chatbots and LLMs enter our lives, asking about what’s happening now will be a big chunk of people’s interests. It always has been.

Going back to how this piece started: LLMs are amazing, taking over the world, but have a few major weaknesses. A big one is no direct access to recent information. We offer the first AI-focused solution to this problem.

Not only is our news coverage live, machine-written, fast* and comprehensive; it’s also ranked, de-duplicated, filtered, NER-tagged and ready for consumption not just by readers like you but also by chatbots and other LLMs.

Reach out to us if you’re interested. Public API coming shortly.

Q&A

Q: Are you looking to replace Twitter/X?

A: No. Despite occasional efforts to define Twitter around news and current events, Twitter is many things.

This is why Twitter “Trending Topics” don’t really work for news discovery. Anything could be trending on Twitter; some of it is fun or magical. Much of it is not news. And it’s far from comprehensive.

We could use our AI to highlight specific great tweets, and we may do this. But for now we are sticking to the Twitter feed as a news source. Even though 90%+ of tweets we read don’t end up attached to a news story.

Q: Are you looking for help?

A: Yes. We are a small five-person team. Big enough to build and operate this, but we aren’t good at everything. For example, we’re not bad at product (strong for a machine-learning-focused team) but this isn’t our A+ skill. Some of you have built consumer apps and websites, and have a keen sense for the user experience. Please reach out to us.

Q: Are you writing stories with GPT4?

A: Yes. Although with our volume of stories, most are still being written by GPT3.5, which is faster and cheaper. We will have more and more of the stories written by GPT4 over time, especially as GPT4 is much better than GPT3.5 at following custom instructions, using extra information, and handling voice / formatting. Stay tuned.

Q: Can you cover my niche topic?

A: Probably. This is a common request. We believe our work will scale from the biggest stories and topics to more niche ones, whether that’s AI startups, science and biotech, new developments on the blockchain, local news, and so forth.

Q: The stories don’t look quite realtime enough. Can you be faster?

A: Yes. Our major focus is driving the story generation to realtime, on par with Twitter, Bloomberg and other fast news sources. The data processing and machine learning pipelines currently introduce delays, but these will be ironed out. Our aim is absolutely to be in the moment — while giving you a good way to see previous stories about a topic or entity as well. Curating the news, in realtime.

Closing thoughts

Check out DeepNews! Let us know what you like and what can be improved, in the comments below.

Most of the feedback we’ve gotten so far has centered around covering more niche topics, and being more realtime. Expect big improvements on both.

We’ll be in San Francisco for the week of OpenAI’s Developer Conference on November 6th 2023 — please reach out and say hello.

As mentioned on Craig Jones’s El Segundo podcast, reach out if you’re a developer or otherwise smart technical person interested in generative AI, realtime data, or otherwise curious about joining the team. We’re improving the product rapidly, and will be at this for a long time.
