Regulating AI by the FLOPS: What Does It Mean?

Making sense of the Biden White House executive order on AI, AGI risk and regulation

The story dropped on Monday, October 30th. From DeepNews:

President Biden Signs Sweeping, First-Ever AI Executive Order to Cut Risks and Boost US Tech Talent, National Security

October 30, 2023 at 06:10 PM (UTC)

President Biden has signed the U.S. government's first-ever, sweeping and wide-ranging executive order to expand the government's capabilities in monitoring and cutting AI risks. The order aims to boost US tech talent, prevent AI from being used to threaten national security, and develop safety guidelines. It builds on non-binding agreements with AI companies and sets new rules to make AI safer and more secure. The order also focuses on protecting privacy, civil rights, and supporting consumers, workers, and students affected by AI. It is seen as a significant step in ensuring the US remains at the forefront of AI development while addressing potential risks.

Interesting, maybe even significant, certainly a bit historic, but perhaps not earth-moving.

As people started reading the details of the Executive Order and posting about them on Twitter/X on Tuesday morning, well, it now seems like a big deal.

Follow along as I show the key tweets in today's discussion, break down what it all means, and lay out the sides of this argument: the best, most representative words from all sides (hopefully I curated those more or less correctly), plus some technical explanations for those of you who may not know what a FLOP is, or how big and scary these large language models (LLMs) really are. Happy Halloween 🎃

Here is the first analysis tweet I saw this morning, retweeted by the legend John Carmack. A good entry point:

the Executive Order is aiming to regulate AI models

models are identified by size / complexity

“big” models need to be registered with the government

once this is normal, the agencies can of course change these definitions

John Walker notes the comparison to the AMT (alternative minimum tax) in a fuller blog post expanding on his tweet

no such regulations existed before this week(*)

tech pioneers like John Carmack are mad (and a bit heartbroken perhaps) about the government stifling innovation

Most likely you are wondering: how big is this 10^26 threshold anyway? Are current models like ChatGPT bigger than that, or not?

Soumith Chintala, co-founder of PyTorch, explains:

Facebook’s 70B-parameter model took ~5e24 FLOPs to train, about one order of magnitude away from falling under this regulation

GPT-4 is probably already past the 1e26 threshold

The regulation isn’t about model size; it’s about the total machine “effort” (compute) spent training the model, so you can exceed it by training your model for too long
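To make Soumith's point concrete: a widely used back-of-the-envelope estimate for training a dense transformer is about 6 operations per parameter per training token, C ≈ 6·N·D. That rule of thumb comes from the scaling-law literature, not from the executive order, which simply counts total integer or floating-point operations above 10^26. The minimal Python sketch below assumes that rule and shows the same 70B-parameter model landing on either side of the line depending only on how many tokens it is trained on. The token counts and the `must_report` helper are hypothetical, for illustration only.

```python
# Rough training-compute estimate for a dense transformer, using the
# common C ~= 6 * N * D rule of thumb (N = parameters, D = training tokens).
# This is an approximation, not the executive order's official accounting.

EO_TRAINING_THRESHOLD = 1e26  # total integer or floating-point operations


def training_flops(params: float, tokens: float) -> float:
    """Approximate total training operations: ~6 per parameter per token."""
    return 6.0 * params * tokens


def tokens_to_cross(params: float, threshold: float = EO_TRAINING_THRESHOLD) -> float:
    """Training tokens at which a model of this size reaches the threshold."""
    return threshold / (6.0 * params)


def must_report(params: float, tokens: float) -> bool:
    """Hypothetical helper: does this run exceed the 1e26-operation threshold?"""
    return training_flops(params, tokens) > EO_TRAINING_THRESHOLD


if __name__ == "__main__":
    n = 70e9  # a 70B-parameter model
    for d in (2e12, 20e12, 300e12):  # illustrative token counts (assumptions)
        c = training_flops(n, d)
        print(f"{d:.0e} tokens -> {c:.1e} ops, over threshold: {must_report(n, d)}")
    print(f"tokens needed to cross 1e26: {tokens_to_cross(n):.2e}")
```

With 2 trillion tokens the estimate comes to about 8.4e23, and crossing 1e26 would take roughly 2.4e14 tokens. Estimates for real models depend heavily on assumptions about data and hardware utilization, so treat numbers like these as order-of-magnitude only.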

The rules came in at right about the level of the best known models…

Hence the regulation also applies to “AI clusters,” meaning GPUs wired together to train big models. You gotta let the government know if you build one.
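The cluster rule is stated as a rate rather than a total, which is where FLOPS (per second) and FLOPs (a count) part ways. As I read the order's interim criteria, a cluster counts if its machines sit in a single datacenter, are networked at over 100 Gbit/s, and have a theoretical peak of 10^20 integer or floating-point operations per second. The sketch below multiplies GPU count by an assumed per-GPU peak, then estimates how many days such a cluster would need to accumulate 10^26 operations. The 1e15 ops/s per GPU, the 40% utilization, and the helper names are illustrative assumptions, not figures from the order.

```python
# Sketch: does a hypothetical GPU cluster cross the order's interim cluster bar,
# and how long would it take to reach the 1e26 training threshold?
# Per-GPU peak and utilization are illustrative assumptions.

CLUSTER_THRESHOLD_OPS_PER_SEC = 1e20  # theoretical peak, operations per second
TRAINING_THRESHOLD_OPS = 1e26         # total operations for one training run
SECONDS_PER_DAY = 86_400


def cluster_peak(num_gpus: int, peak_per_gpu: float) -> float:
    """Theoretical maximum throughput: GPU count times per-GPU peak (ops/s)."""
    return num_gpus * peak_per_gpu


def cluster_is_reportable(num_gpus: int, peak_per_gpu: float, network_gbps: float) -> bool:
    """Hypothetical check of the interim criteria; assumes one datacenter.

    Requires networking over 100 Gbit/s and a peak at or above 1e20 ops/s.
    """
    return (network_gbps > 100
            and cluster_peak(num_gpus, peak_per_gpu) >= CLUSTER_THRESHOLD_OPS_PER_SEC)


def days_to_threshold(num_gpus: int, peak_per_gpu: float, utilization: float) -> float:
    """Days of training needed to accumulate 1e26 ops at a given utilization."""
    sustained = cluster_peak(num_gpus, peak_per_gpu) * utilization
    return TRAINING_THRESHOLD_OPS / sustained / SECONDS_PER_DAY


if __name__ == "__main__":
    peak = 1e15  # assumed ~1 PFLOP/s dense 16-bit peak per GPU
    for gpus in (10_000, 100_000):
        print(gpus, cluster_is_reportable(gpus, peak, network_gbps=400),
              f"{days_to_threshold(gpus, peak, utilization=0.4):.0f} days to 1e26")
```

Under these assumptions, 100,000 GPUs clear the 10^20 bar and would reach 10^26 operations in about a month, while 10,000 GPUs fall below the cluster bar yet could still cross the training threshold in under a year. The two rules overlap, but they do not coincide.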

Roughly speaking, the rule was also drawn to capture the top of what’s currently available.

As relevant and exciting as this FLOPS analysis is, I think it suffices to say that the White House knew what they were doing. These numbers did not come out of nowhere.

Those of us not running a huge cluster or training GPT-4 have nothing to declare, and honestly, it would be hard for the USG not to know about someone training a model bigger than GPT-4.

Most of us could not stop talking about our big models anyway, and now Google, OpenAI and a few others have to register their model training capacity with the federal government.

What is there to worry about?

Later this morning, also from DeepNews:

Google Brain Cofounder Andrew Ng Warns Big Tech Companies of Spreading Fear about AI to Gain Control

October 31, 2023 at 10:38 AM (UTC)

Google Brain cofounder, Andrew Ng, warns that major tech companies are spreading fear about artificial intelligence (AI) to gain control over the industry. Ng believes that the idea of AI leading to the extinction of humanity is a lie being promoted by big tech in order to trigger heavy regulation and shut down competition in the AI market. This comes after Google executives downplayed the company's AI position during testimony at a federal antitrust trial.

Needless to say, that got some reactions. Geoffrey Hinton enters the chat.

Those two represent the two main camps in this argument:

“AI is good” / “risk of catastrophe is not great”

“young people and new companies should not be prevented from using the best technology”

Andrew Ng, Yann LeCun

many VCs and business people

libertarians

“AI risk is not worth it” / “we need to monitor, slow down, and be ready to shut down rapid AI expansion”

Geoffrey Hinton

AI risk philosophers, many intellectuals

AI Safety researchers (not all)

Geoff Hinton didn’t just leave Google over disagreements (presumably about the risk of AI); in leaving, he gave up a role that reportedly paid him millions of dollars a year, a very high-status position, and access to a private jet (to help with his bad back). No one could claim that he isn’t sincere about his concerns.

On the anti-regulation side, you have three main types of arguments:

freedom and liberty arguments: “code is speech” / “let the kids build”

AI risk people are self-absorbed or have ulterior motives

regulation is part of a game to hurt some (OpenAI? new startups?) and benefit others (incumbents like Big Tech who work with regulators)

Prominent VC David Sacks represents the pro-freedom, pro-business side. And he is right to some extent: the US software market is largely unregulated. You don’t even need a license or a degree to be a computer programmer. Yet this is the best-performing sector of the US economy.

Mark Chen from OpenAI perhaps represents a more cynical view, to much pushback…

It’s easy to shoot such statements down. But setting aside what exactly “regulatory capture” means, as someone in the AI space I know that views on AI safety are mixed.

Within OpenAI, there are surely people with Mark’s view, but also many with concerns about AGI causing massive human harm, or at least an intellectual interest in exploring these ideas.

Overall, as I said on a podcast, I still think that most technical people working on deep learning models are broadly pro-growth and anti-limitation. However, all of us will entertain an intellectual discussion about AI “getting out of hand,” and we know very smart people in our circles (mostly not AI developers, but still) who really care about this issue and are in the broadly pro-caution camp. It would probably ruin your social life, as an AI developer, not to be at least open to the idea of some AI risk, unless you take the strong, totally pro-AI view and don’t look back. Most people don’t aim to be that confrontational.

If you work in AI people will ask about AI risk. All the time. Especially at parties. Especially at good parties.

As AI gets closer to being general compute, a default way of solving all kinds of technical problems, will that bring more push-back and slow-down mechanisms, or will more and more developers resist limits on what they are building?

Andrew Ng — ever the gentleman — wrote a longer post to try and square the circle on AI risk.

Laws to ensure AI applications are safe, fair, and transparent are needed. But the White House's use of the Defense Production Act—typically reserved for war or national emergencies—distorts AI through the lens of security, for example with phrases like "companies developing any foundation model that poses a serious risk to national security."

Yes, AI -- like many technologies such as electricity and encryption -- is dual use in the sense it can be used for civilian or military purposes. But conflating AI safety for civilian use cases and military applications is a mistake.

It’s also a mistake to set reporting requirements based on a computation threshold for model training. This will stifle open source and innovation: (i) Today’s supercomputer is tomorrow’s pocket watch. So as AI progresses, more players -- including small companies without the compliance capabilities of big tech -- will run into this threshold. (ii) Over time, governments’ reporting requirements tend to become more burdensome. (Ask yourself: Has the tax code become more, or less, complicated over time?)

The right place to regulate AI is at the application layer. Requiring AI applications such as underwriting software, healthcare applications, self-driving, chat applications, etc. to meet stringent requirements, even pass audits, can ensure safety. But adding burdens to foundation model development unnecessarily slows down AI’s progress.

While the White House order isn’t currently stifling startups and open source, it seems to be a step in that direction. The devil will be in the details as its implementation gets fleshed out -- no doubt with assistance from lobbyists -- and I see a lot of risk of missteps. I welcome good regulation to promote responsible AI, and hope the White House can get there.

3:02 PM · Oct 31, 2023

I don’t know if this measured approach will work.

Channeling Nassim Taleb’s “Minority Rule”: a small group that cares intensely about an issue can set a global policy that the majority disagrees with, if the majority doesn’t care nearly as much.

That is an argument for being unreasonable. And most developers just want to get along, or maybe just get back to coding.

When Geoff Hinton left Big Tech to talk about AI risk, he may have left tens of millions of dollars at the Googleplex, but he gained enormous credibility among AI alarmists (apologies for the loaded term) and also among mostly non-technical people with big brains and an intellectual interest in this issue [and no particular personal stake in coding big models].

Although Hinton freely concedes that AI has the potential to make lives a lot better (as well as a >0.1 chance of making lives much worse), his biggest support comes from people who make concern about AI risk a part of their intellectual identity.

As folks point out (I’ll stop with the quoted tweets), these Biden White House AI rules were written by people who understand how deep learning models work, which is not true of every government regulation. Conspiracy or not (I vote a soft “no”), these rules are a substantial first step toward preventing the development and use of some large deep learning models.

Even if the specific rule has limited power, the folks who’ve pushed for such rules for some time will feel empowered today. Their side is suddenly winning.

I can’t think of a sincere, calm, measured and moderate way to push back against that momentum…

Needless to say, I am against the thrust of such regulations, even though I don’t think they will stop or even slow down AI progress.

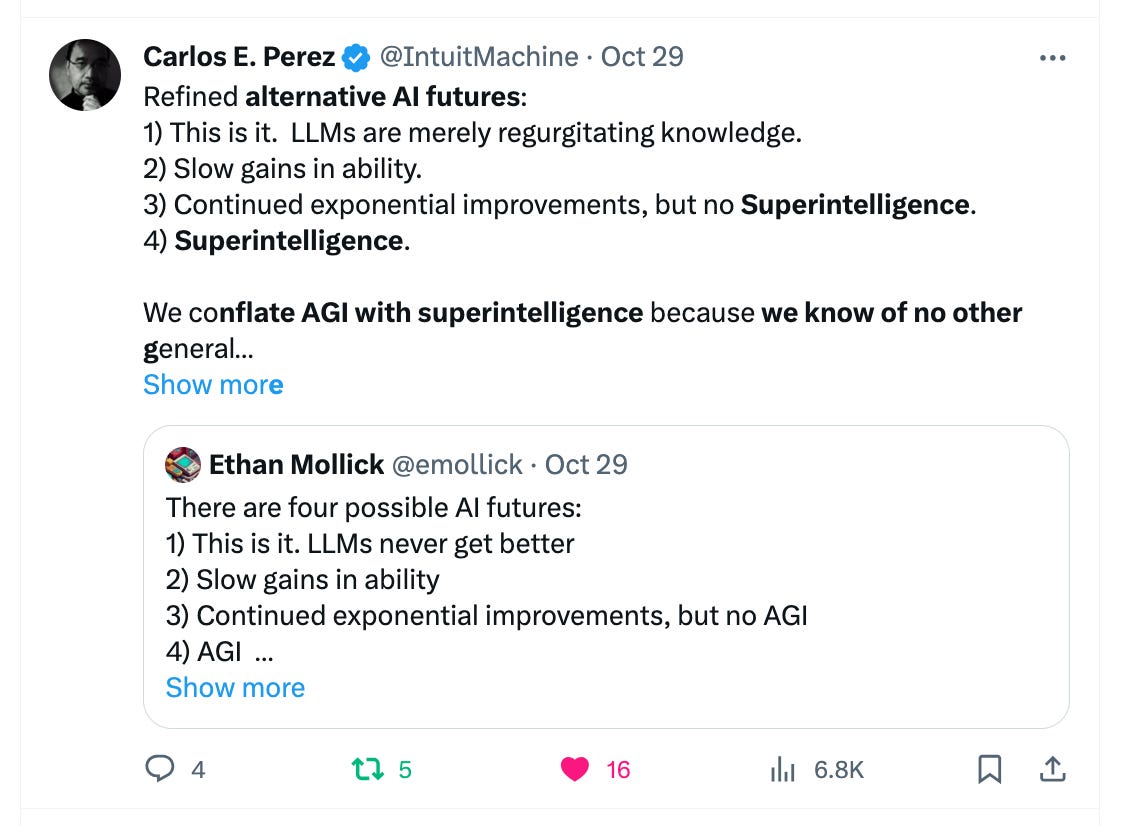

The biggest models, like ChatGPT with GPT-4, are still not all that powerful. They are decent summary and mimicry engines, and that is useful. Perhaps they are a bit more. We are far from superintelligence.

They will definitely keep getting better. I can’t wait for GPT-5. Whether I have to scan my fingerprint to use it or not…

On a personal note, I’m in San Francisco for a week or two, mainly to meet with AI people. If you have elite AI-event recommendations, let’s meet there.

Going to AI events and meeting young founders has been fun. I love hearing what they are working on, shared with a fellow technical founder rather than an elderly investoooor…

Here’s my biased, small-sample summary:

a tremendous number of young developers (and some non-tech folks) want to build demos / startups around LLMs

skews extremely young

very technically minded

“AI as general compute” thesis sounds right

the projects tend to cluster around a few ideas

image generation for content / advertising

text understanding / summarization for large documents / private datasets

automated “logic flows” for semi-repetitive task automation

also LLMs for code completion… but those folks don’t really go to events; they are home coding

Some projects are focused on solving specific business problems. Others are building general-purpose demos like “ask a chatbot questions about any document.” The split seems pretty extreme here. In a sense you have two kinds of people: those who see business problems and want AI to help, and those who see a cool way to connect AI to something. Both of these approaches can arrive at the same conclusions.

Overall, I’m excited and a bit surprised to see the popularity of LLMs. We’ve all used ChatGPT and gotten excited. Yet LLMs don’t have much impact on our lives so far, except where we aren’t looking under the hood, such as in some apps… but these “kids” are living in the future.